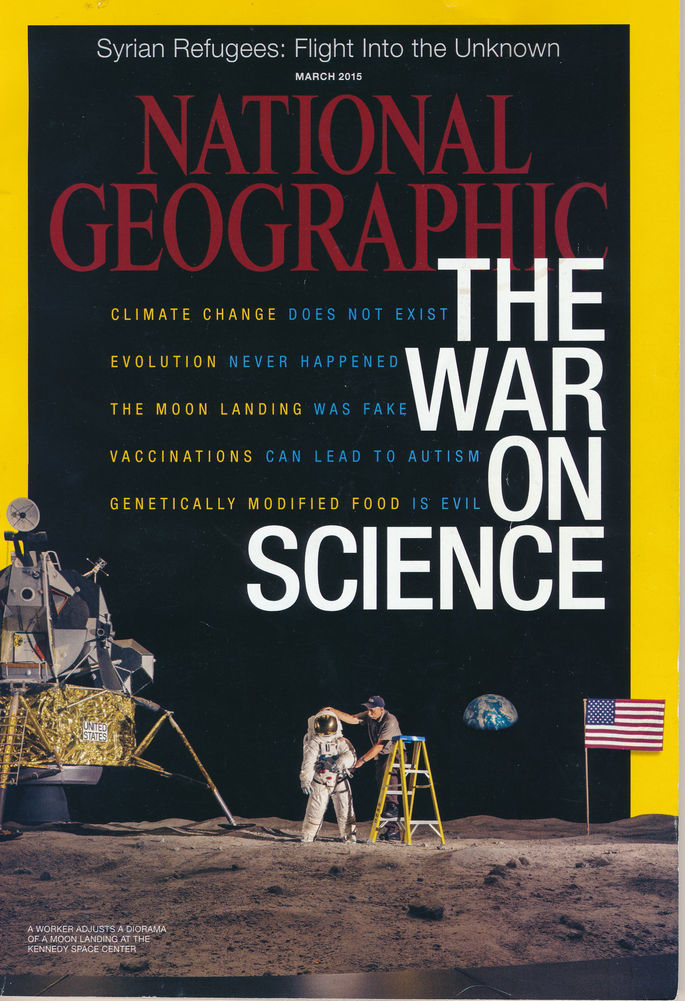

Editorial Note: This post is from John Horgan, who writes for Scientific American. The original is here. There is one change – the image used. JH’s posts are always worth reading. In this one he takes on a worrying trend to regard science as sacrosanct. A recent front cover for National Geographic brought this home to me. To question whether vaccines might cause autism, it seems, is pretty well to be a Flat Earther. This has clear and worrying implications for me, for RxISK and for anyone who has ever been injured by a drug.

Years ago I was blathering to a science-writing class at Columbia Journalism School about the complexities of covering psychiatric drugs when a student, who as I recall had a medical degree, raised his hand. He said he didn’t understand what the big deal was; I should just report “the facts” that drug researchers reported in peer-reviewed journals.

I was so flabbergasted by his naivete that I just stared at him, trying to figure out how to respond politely. I had a similar reaction when I spotted the headline of a recent essay by journalist Chris Mooney: “This Is Why You Have No Business Challenging Scientific Experts.”

(Journalist Chris Mooney argues that the views of anti-vaccine activist Jenny McCarthy can be dismissed because she is not a “scientific expert,” but by this logic the views of journalists like Mooney should also be discounted.)

Mooney is distressed, rightly so, that many people reject the scientific consensus on human-induced global warming, the safety of vaccines, the viral cause of AIDS, the evolution of species. But Mooney’s proposed solution, which calls for non-scientists to yield to the opinion of “experts,” is far too drastic.

In support of his position, Mooney cites Are We All Scientific Experts Now?, a book by sociologist of science Harry Collins. Rejecting the hard-core postmodern view of science as just one of many modes of knowledge, Collins argues that scientific expertise is uniquely authoritative. Here’s how Mooney puts it:

“Collins carefully delineates between different types of claims to knowledge. And in the process, he rescues the idea that there’s something very special about being a member of an expert, scientific community, which cannot be duplicated by people like vaccine critic Jenny McCarthy… Read all the online stuff you want, Collins argues—or even read the professional scientific literature from the perspective of an outsider or amateur. You’ll absorb a lot of information, but you’ll still never have what he terms ‘interactional expertise,’ which is the sort of expertise developed by getting to know a community of scientists intimately, and getting a feeling for what they think. ‘If you get your information only from the journals, you can’t tell whether a paper is being taken seriously by the scientific community or not,’ says Collins. ‘You cannot get a good picture of what is going on in science from the literature,’ he continues. And of course, biased and ideological Internet commentaries on that literature are more dangerous still. That’s why we can’t listen to climate change skeptics or creationists. It’s why vaccine deniers don’t have a leg to stand on.”

Mooney is hardly the only person insisting “You Have No Business Challenging Scientific Experts.” Versions of this assertion constantly pop up in debates over hot-button scientific issues. Defenders of supposedly canonical views of global-warming, genetically modified foods and vaccines dismiss non-expert dissidents. Just last week, a friend and fellow journalist mocked meteorologists who doubt climate change–because they’re meteorologists, not climate scientists.

The irony is that the “No Business Challenging Scientific Experts” argument applies not only to activists like Jenny McCarthy but also to journalists like Mooney and me. After all, we journalists are “outsiders” and “amateurs,” especially compared to the scientists whose work we cover, so how dare we second-guess them?

I agree with Mooney and Collins on some fundamental issues. I’m not a Kuhn-style postmodernist, the kind who puts scare quotes around “truth” and “knowledge.” Science is a uniquely potent method for discovering how nature works, and it gets some things right, once and for all: the atomic theory of matter, the (basic) big bang theory, evolution by natural selection, DNA-based genetics.

Also, I give great weight to consensus and credentials, which provide a fast and dirty way to decide whether a claim should be taken seriously. One of the reasons I doubted that “cold fusion” had been achieved in the late 1980s was that scientists claiming to have observed room-temperature fusion tended to be at second-rate institutions; scientists at top-tier institutions could not replicate the results.

But the history of science suggests—and my own 32 years of experience reporting confirms—that even the most accomplished scientists at the most prestigious institutions often make claims that turn out to be erroneous or exaggerated.

Scientists succumb to groupthink, political pressures and other pitfalls. More than a half century ago, Freudian psychoanalysis was a dominant theory of and therapy for mental disorders. The new consensus is that mental illnesses are chemical disorders that need to be chemically treated.

This paradigm shift says more about the financial clout of the pharmaceutical industry–and its control over the conduct and publishing of clinical trials–than it does about the actual merits of antidepressants and other drugs. That’s why I was so stunned when that Columbia student said peer-reviewed “facts” could speak for themselves.

Here’s another example related to the work of Harry Collins, who inspired Mooney’s column. Collins’s respect for scientific expertise stems in part from his decade-long immersion in the field of gravitational-wave studies. Gravitational waves made headlines a year ago, when astrophysicists overseeing an experiment called Background Imaging of Cosmic Extragalactic Polarization 2 announced they had discovered the “first direct evidence” of inflation, a 35-year-old theory of cosmic creation. According to the group, gravitational waves triggered by inflation had distorted the big bang’s microwave afterglow in measurable ways.

No less an authority than Stephen Hawking declared that the BICEP2 results represented a “confirmation of inflation.” I nonetheless second-guessed Hawking and the BICEP2 experts, reiterating my long-standing doubts about inflation. Guess what? Hawking and the BICEP2 team turned out to be wrong.

I’m not bragging. Okay, maybe I am, a little. But my point is that I was doing what journalists are supposed to do: question claims even if–especially if—they come from authoritative sources. A journalist who doesn’t do that isn’t a journalist. He’s a public-relations flak, helping scientists peddle their products.

And it’s precisely because we journalists are “outsiders” that we can sometimes judge a field more objectively than insiders. Mooney surely agrees with me on this. There is an enormous contradiction buried within his “No Business Challenging Scientific Experts” argument. He obviously doesn’t want us to yield to every scientific consensus, only to those that he, Mooney, deems credible.

Google is reportedly working on algorithms for evaluating the credibility of websites based on their factual content. But there will never be a foolproof way to determine a priori whether a given scientific consensus is correct or not. You have to do the hard work of digging into it and weighing its pros and cons. And anybody can do that, including me, Mooney and even Jenny McCarthy.

By the way, I think McCarthy grossly overstates the dangers of vaccines–I’m glad my kids got vaccinated–but I, too, have concerns about some vaccines.

Editorial Note: On the vaccine issue, here is a recent article of interest on Vaccine Assay Secrecy by Matthew Herder and Colleagues – Herder Vaccine assay secrecy.

Everyone has the right to challenge “scientific experts” II

(This is a follow up post from John Horgan on the topic – the original is here.)

I recently knocked science journalist Chris Mooney for asserting that “You Have No Business Challenging Scientific Experts.” Non-experts have the right and even the duty, I retorted, to question scientific experts, who often get things wrong.

Far from reconsidering his stance, Mooney doubles down on it in a Washington Post column, “The science of why you really should listen to science and experts,” that defends not just scientific experts but experts in general. Mooney ends up not boosting experts’ credibility but undermining his own.

He cites a study that found that judges and other lawyers show less ideological bias, or “identity-protective cognition,” in their application of the law than law students and lay people. Titled “‘Ideology’ or ‘Situation Sense’? An Experimental Investigation of Motivated Reasoning and Professional Judgment,” the study was carried out by Yale law and psychology professor Dan Kahan and five other scholars.

To my mind, the study merely shows that lawyers and judges know the law better than law students and non-lawyers. That’s reassuring, but surely it does not mean we should always trust lawyers’ legal advice, especially since lawyers so often disagree on interpretations of the law. Consider the rancor of recent debates on health care, immigration, taxes, the environment and other issues in Washington, where more than one third of current Representatives and one half of Senators have law degrees, according to The National Law Journal.

Mooney nonetheless insists that the Kahan study “fits nicely alongside a growing trend toward robustly defending and reaffirming the importance of experts.” As an example of this trend, he cites the 2005 book Expert Political Judgment by political psychologist Philip Tetlock.

Mooney’s citation of Tetlock is bizarre, because Expert Political Judgment—far from a defense of experts—is a devastating critique of them. Tetlock reports on his long-term study of 284 professional pundits, including academics, government officials and journalists, who comment on politics and related issues in scholarly journals and conferences and via mass media. Over two decades, Tetlock recorded some 28,000 predictions by the experts related to wars, elections, economic collapses and other events.

In a terrific 2005 review, “Everybody’s An Expert,” New Yorker writer Louis Menand summarizes Tetlock’s conclusions as follows:

“people who make prediction their business—people who appear as experts on television, get quoted in newspaper articles, advise governments and businesses, and participate in punditry roundtables—are no better than the rest of us. When they’re wrong, they’re rarely held accountable, and they rarely admit it, either. They insist that they were just off on timing, or blindsided by an improbable event, or almost right, or wrong for the right reasons. They have the same repertoire of self-justifications that everyone has, and are no more inclined than anyone else to revise their beliefs about the way the world works, or ought to work, just because they made a mistake. No one is paying you for your gratuitous opinions about other people, but the experts are being paid, and Tetlock claims that the better known and more frequently quoted they are, the less reliable their guesses about the future are likely to be. The accuracy of an expert’s predictions actually has an inverse relationship to his or her self-confidence, renown, and, beyond a certain point, depth of knowledge. People who follow current events by reading the papers and newsmagazines regularly can guess what is likely to happen about as accurately as the specialists whom the papers quote.”

Menand’s review is loaded with gleeful one-liners, including this one: “Human beings who spend their lives studying the state of the world, in other words, are poorer forecasters than dart-throwing monkeys.” And yet this is no laughing matter. Consider how “experts” in the government, academia and media helped enable the catastrophic U.S. wars in Afghanistan and Iraq and the economic collapse of 2008. Example: New York Times columnist Thomas Friedman, who just before the U.S. invasion of Iraq expressed the hope that it would lead to “a more accountable, progressive and democratizing regime.”

How can Mooney possibly interpret Tetlock’s book as a defense of experts? Here’s how. He seizes on Tetlock’s finding that some experts were better forecasters than others. They tended to be not what Tetlock calls “hedgehogs,” who explain the world in terms of one big unified theory, but “foxes.” Foxes, Tetlock explains, “are skeptical of grand schemes,” and “diffident about their own forecasting prowess.”

In other words, the most credible experts are those who, implicitly, warn us to be wary of experts. Mooney is oblivious to this irony. “So experts really do exist,” he blithely concludes, “and they really are different from non-experts. Now, all we have to do is listen to them.”

I prefer Menand’s conclusion. He writes that “the best lesson of Tetlock’s book may be the one that he seems most reluctant to draw: Think for yourself.”

Addendum: Listen to me talk about the need to challenge experts on New Hampshire Public Radio

From Corey Powell, old friend, distinguished science writer, former editor-in-chief of Discover, whom I quote dissing meteorologists above:

Just to be clear–my argument wasn’t that meteorologists lack the ability (or worse, the right) to challenge climate studies. My argument is that they suffer from a false sense of expertise that makes them think they can speak with authority without bothering to really understand the other field. It’s a lazy kind of arrogance. This often happens when scientists get taken with their own brilliance and think, hey, I know about genes, I’ll bet I really understand consciousness or solar energy or alien life or whatever. Of course almost everybody with a healthy ego does this to some extent, fantasizing that they are experts at something they know nothing about. But with the meteorologists (as a case study) there is a more specific type of confusion, and a more specific type of unearned claim to authority. I liked our quick Twitter exchange about the role of curiosity vs. gall. Both of them are about questioning everything, including expert testimony & peer-reviewed truths. I take a bit longer to reach full boil when I come across BS, but I get there eventually.

From Matthew C. Nisbet, Associate Professor of Communication Studies, Northeastern University:

In American political culture, liberal commentators and advocacy journalists tend to put scientists on a sacred pedestal and are often funded to do so and attract audiences by doing so.

Climate scientists especially are not only portrayed as innocent priests and powerful seers but also as vulnerable martyrs that must be protected and defended against any criticism, even when such criticism comes from social scientists or specialist journalists who are speaking from the perspective of their own expertise.

On complex, wicked problems like climate change, the only way we identify paths forward and opportunities for political cooperation is through healthy disagreement. Criticism helps widen the menu of options that might be pursued and calls attention to faulty assumptions.

Another example of the need for informed criticism of experts, as I discussed in a recent co-authored paper, is the work of the journalist Gary Taubes who helped spur scientists to reconsider their assumptions about the linkages between diet, obesity, and other negative health outcomes.

From David Gorski, physician and blogger at “Science-Based Medicine”:

Gorski critiques my column in a post titled “On the ‘right’ to challenge a medical or scientific consensus.” Like Mooney–and like me!–Gorski has concerns about the propagation of pseudoscience, but his piece is one long exercise in begging the question. That is, he implicitly assumes what he is attempting to argue. He writes: “It’s… important to remember that there are scientific consensuses and then there are scientific consensuses. What I mean is that some consensuses are stronger than others, something Horgan seems to ignore or downplay.” The primary point of my piece is that there is often no way to know whether a consensus is legitimate or not; scientists often claim more certainty than is merited, and hence outsiders are justified in questioning scientists’ proclamations. Gorski’s own field, oncology, offers an excellent example of this problem. For decades, the consensus was that cancer should be combatted with frequent testing and aggressive treatment, but now that consensus is unraveling. “In the end,” Gorski concludes, “what Horgan seems to be arguing is that we should take pseudo-expertise seriously.” Actually, I am arguing that the public should be wary not only of pseudoscientific charlatans peddling homeopathy and “energy healing” (Gorski’s examples) but also of genuine experts like Gorski.

See also my next post, “Sociologist Steve Fuller: Scientists Aren’t More Rational Than the Rest of Us”; and my Bloggingheads.tv chat with anonymous blogger “Neuroskeptic,” an expert who displays admirable skepticism toward his own field.


There are some interesting points here but it largely mistakes the issue. Crucially, in the context, Jenny McCarthy is not, was never, an anti-vaccinationist – she was a celebrity mother who witnessed vaccine injury to her child and became a campaigner for vaccine safety (actually she has been more or less forced into silence on the matter for several years). It has been part of the gambit of government and industry to characterise parents campaigning for vaccine safety as “anti-vaxxers” when for the most part they were parents or grandparents who had witnessed damage to their children from products they had been persuaded to use: it is true that recently the mistrust between such parents and “public health” has become so great that many have become radicalised into anti-vaccinationists, but it is not where it started and not what Jenny McCarthy is.

And of course this is not for the most part about science at all but about denying damage. If Jenny wants to talk about what happened to her kid they will make it very difficult for her to work (hate material will appear in Time magazine, even NY Times and Washington Post, reminding people shock-horror that she was a Playboy Centrefold). And what people like Mooney, Mnookin and Gorski (Goldacre too) are doing is ad hominem with bells on. This is not about hard-headed science at all, it is about making people shut up, and marginalising them socially and professionally: it is about skewing the data by socially repressive techniques (even if some of the participants are too stupid to realise what they are doing). On the Sense About Science website there used to be an article about the necessity of driving people talking about vaccine damage out of the mainstream media (a project in which they have long since succeeded).

And what we are talking about is not like physics at all (or the bits of physics that have stood the test of time): there is no central unchanging law of human immunity which underpins the project – there are only industrial products injected or sometimes swallowed, which may not be as effective or safe as the manufacturers would have us believe, and the evidence is usually of a statistical kind which can be distorted or lied about (there would be an unending supply of documentable examples), while the bodies that license and prescribe them are in bed with the industry. It is quite true that it should not need a scientist to penetrate this farrago: any competent investigative journalist could do it.

Meanwhile, it is kind of obvious that vaccines can cause encephalopathies and other types of organic damage (to the gut for example) and that insufficient care is taken. These are the cruise missiles and drone helicopters of the war on the diseases, billions of them are deployed each year and the people in charge don’t want to know about the collateral damage. If you actually care about science the data in vaccinology is let’s face it mostly junk.

http://www.ageofautism.com/2015/02/a-reminder-dr-julie-gerberding-.html

http://www.ageofautism.com/2015/01/the-washington-post-whips-up-fear-and-blames-andrew-wakefield.html

http://www.ageofautism.com/2014/07/best-of-aofa-naked-cdc-truth-about-mmr.html

Reminds me of the dark ages when we had no right to challenge the men in dresses.

John Stone, it should be noted, is one of the last admirers of Andrew Wakefield, a well-known fraud, who suggested that MMR caused autism. And as for Jenny McCarthy’s position: correlation and causation are not the same thing.

No, I have never had any difficulty defending Andrew Wakefield – a great many lies were told about him – and it seemed the honourable thing to do. Mostly, when people lie they are covering up something. This may help:

http://www.ageofautism.com/2015/01/upworthy-lies-about-the-wakefield-lancet-paper.html

Unfortunately, hit and run is the game of the pharmaceutical industry: they don’t like acknowledging damage and with vaccines they have really got it made. Deidre does not like acknowledging damage either, as we saw on an earlier blog: https://davidhealy.org/pharmaceutical-rape/

Pharma apologists are always fond of reciting the “correlation does not equal causation” mantra but it is not very consistent, since their usual recourse is to negative statistical findings (frequently in demonstrably fraudulent studies such as those by Madsen and DeStefano). Normally speaking the physician’s job is to take patient histories but when they involve potential vaccine damage they just seem to disappear. I think this is possibly why the Wakefield Lancet paper was so critical in this story: because it recorded the possible adverse effects of a vaccine. And we know from a High Court judgment that there was no fault with the recording (and in fact Wakefield had nothing to do with that aspect of the data).

Some more on the lies that have been told about Wakefield here:

http://www.ageofautism.com/2011/12/bbc-trustees-stand-by-groundless-insinuations-against-andrew-wakefield-in-.html

It seems, at present, that after thousands of years of intense learning, search and research, humanity has learned very little, and gained less, in the science and wisdom of life. Today, looking at the achievements of digital technology, computers et al., I feel like screaming: “Back to Basics!” Today, when we are able to reach for the moon and beyond, we do not even talk to each other. Today, when we are brimming with weapons of mass destruction, we still do not have a pill of mass protection. We still do not know the difference between a drug and a vitamin.

It is not that we do not know, but that we refuse to admit it. Why? Because of greed. Today, when so many wars are fought on the face of the earth, we forget and ignore the war between the medicines. It is Allopathic vs. Integrative Complementary Medicine. It is Homeopathic vs. Naturopathic Medicine. Ayurvedic vs. Scientology, etc. The list is endless. Is there any hope on the horizon? Yes. How? Activate the innate immunity in real time. Praise the HDL (high-density lipoprotein). KISS the niacin (KISS = Kiss It Simple Stu…). Above all: SLEEP RIGHT! Why? Because when you wake up, You Will Have a Dream!!!

On the apparent nature of “expertise” I am obliged to give a couple of personal anecdotes that remind me of the apparent but false existence of some forms of being presented as an “expert” (a term I despise). In every edition, the New England Journal of Medicine publishes an image quiz, usually of a rare condition with a choice of five diagnoses. Although many, if not most, are completely outside my area of medical “expertise,” I have a ridiculously high score in getting them correct. How? It’s very simple. In my day, when we were still painting ourselves blue in Scotland, we were obliged to learn Latin and Greek and, in fact, these were required for entry to medical school. A glance at the photograph with a recognition of the Greek or Latin root of one of the choices and voila! the correct “diagnosis”. The “expertise,” if there is such, is in remembering two ancient languages.

Some years ago, when I was teaching a seminar at a major Canadian university, I believed it my responsibility to breed the ability to critique any and all peer-reviewed journal articles and discard those found failing to reach appropriate standards in subject selection, methods, statistics used etc. I later received a complaint from the head of the department that the students involved in my seminars had become “so critical, it was difficult to get them to accept anything as truth.” The naive young man at Columbia was probably a product of an educational system in which I find that criticism/critiquing is actually discouraged and that if it is in print, it must be true.

David, by the logic of your article, nobody can ever know anything with clarity. That’s simply foolishness dressed up in outwardly respectable journalistic clothing. But ultimately, one of the core principles of science is our best guide to what is true versus mere speculation. Reported results should replicate when the experiment is re-performed by independent investigators. That principle laid to rest the nonsense concerning cold fusion. In the case of vaccines, the original article which caused most of the so-called “controversy” didn’t meet that standard and had to be withdrawn.

Observed reality is that NOTHING is perfectly safe. But vaccines are rigorously evaluated for safety — and a refusal to have kids vaccinated is directly associated with the persistence of diseases that kill or create long-term harms. Refusal to vaccinate has measurable consequences. It is not at all clear to me that it has measurable benefits in lowered medical risk.

Science isn’t always practiced well or honestly by those who lay claim to status by use of titles like Ph.D. But in a world where many people still believe in angels and sky gods, science is a far superior framework for testing and understanding truth. And journalists are just as prone to exaggeration and created controversy as the scientists whom they attack.

Sincerely,

Richard A. Lawhern, Ph.D.

Senior Systems Engineer (Retired)

and advocate for chronic neurological face pain patients.

Since when did anyone reporting the adverse effect of a vaccine get a fair hearing? In the UK there hasn’t been a single Vaccine Damage Payment Unit award since Robert Fletcher’s in 2010 and that took 18 years of arguing. The spontaneous acknowledgement of vaccine damage by the medical profession or government is almost unknown.

I have made my points about the Wakefield paper above.

On the subject of vaccination, things are very dire in Australia, with a continued push towards compulsory vaccination, with no exemptions, see for example this recent article: “Anti-vaccination parents could be refused government benefits” http://www.abc.net.au/pm/content/2015/s4211991.htm

It seems anybody who questions vaccination in any way is branded ‘anti-vaccination’. As citizens we are entitled to ask questions, particularly about the growing number of vaccine products which are being mandated in our society. Instead we are supposed to meekly accept the edicts of often conflicted industry-funded ‘experts’. It’s time for transparency and accountability.

This is very serious because there are some very questionable vaccines on the current schedule, and parents are being forced to have these vaccinations for their children, without valid ‘informed consent’.

Peer review does throw up problems. I am one of the editors of a literary journal which peer reviews. Some time ago one article received a very warm response from the first reviewer and the reverse from the second (who, in effect, said ‘bin it’). The third offered a way out in that she suggested that my journal was not the right place for this article and suggested another. Honour was saved.

In science there can be considerable problems. The late John Maddox, the former editor of ‘Nature’, told me after a few drinks that he had skipped peer review for one article which he published, because he thought it good, but feared that the reviewers would not agree with him. No names were mentioned.

I had to check the National Geographic website to make sure that cover hadn’t been Photoshopped. It just seemed too ludicrous to be real. From a marketing point of view it’s quite clever, but the message is incredibly stupid at the same time.

I see this as directly aimed at someone like me. A graphic designer who dares question science…the audacity!

The thing that Richard appears to have missed is that it’s directed at him too. This is about everyone having the right to challenge scientific experts, and that would include the right of a systems analyst to challenge psychiatry. A Ph.D. in a completely unrelated field does not give you a free pass under Mooney’s line of thinking.

https://davidhealy.org/somatic-symptom-disorder/

Had this designer not challenged the experts, his wife would likely at best have been committed to a mental hospital and at worst have committed suicide (actually it could have been even worse than that), while his children would be in the custody of social services.

Had this designer not challenged the experts, he would still be an undiagnosed coeliac who was 77x more likely to get lymphoma and who would be taking PPIs and back pain medication every day for the rest of his short life – just like his mother, who died from lymphoma at 54 after years of taking antacids, PPIs and heavy pain medication for her back through most of her adult life.

Had this graphic designer not challenged the experts, his brother would still have large bald patches all over his head and beard from his cold sore medication.

Had this designer not had a healthy understanding of evolution and physics, he likely would have never believed any of that stuff. I only bring that up because you mentioned sky gods and fairies… Evolution is the science that will convince you most that sky fairies don’t exist. It’s also the science that, once you get past the basics, will show you why GMOs are potentially dangerous, why novel compounds should be treated first and foremost as poisonous, why breast milk is better than pharma milk, why vaccines may not be good for the human race in the long run, why industrial farming is a complete disaster of potentially apocalyptic scale and why most chemists have completely lost their marbles.

In other words… Sense about Science won’t touch evolution with a barge pole – and Dawkins won’t touch medicine with a barge pole. Why? Because when you get down to brass tacks, they are not very compatible.

Evolution should only be used to bash the creationists….just don’t read any further than the prologue.

Somebody needs to ask this question of the debaters: since many of you challenge and discredit scientific authority on instinct, how do you distinguish between harmful frauds and helpful therapies? Where the heck is your EVIDENCE! Or doesn’t evidence matter to you? Anecdotes DO NOT COUNT, people! We can’t generalize from them.

Before anybody pipes up with the time-worn assertion that we can’t know the evidence because “somebody” (never named, of course, even if attributed by general category) has suppressed it in a conspiracy… there’s an old but quite appropriate observation to take on board. “Never attribute to conspiracy that which is credible as a simple consequence of simple human cussedness.”

Ultimately this discussion is puerile without evidence. So quote your independently validated references. Or go home and talk to yourselves.

Richard

“Anecdotes” most certainly do count. In the old days, when your Xmas tree lights were old-style bulbs, every year they wouldn’t work when taken down. You’d unscrew each bulb in turn till the lights came on – and then throw away the dud bulb. This is an n of 1 trial – the highest level of evidence in evidence-based medicine – an anecdote.

The original accounts of people becoming suicidal on Prozac were worth far more than the clinical trials done which claimed the science showed no problem – a position FDA colluded with. The reason trials didn’t show a problem is because the company manipulated the data as did all of the other SSRI companies. And the scientific publications were all company or ghost-written. So where is your evidence? Or doesn’t evidence matter to you?

Even if the data weren’t manipulated and inaccessible and the trials ghost written, RCTs systematically get the wrong answer every time a drug and an illness produce the same symptom – as in suicide. How do you solve this? How do you distinguish harmful frauds and helpful therapies in this case?

You solve it by being rational – not by having recourse to some automated controlled trial procedure. You also solve it as many of the people here have had to do by not turning to the authorities – experts, regulators and companies – who in the case of most drug induced adverse events appear pretty compromised. The fraud action against GSK and $3 billion fine was for standard – not exceptional practice.

If you want to read Pharmageddon and Let Them Eat Prozac you will see them stuffed full of evidence that supports the kind of points John Horgan and many of the commentators here make.

There is a much earlier blog – over two years ago – that gives my version of what is going on that I invite you to read – The Factories of Post-Modernism – or alternatively the last chapter of Pharmageddon.

David

How about decades of hands-on experience? Does that count for nothing?

I fixed up the National Geo cover to make it a little more honest.

https://onedrive.live.com/redir?resid=2F29AC6DD90D4F9A!5226&authkey=!AM2QtDvbISbxl7s&v=3&ithint=photo%2cjpg

*Share* *Scam* *Alert*

http://www.gsk.com/en-gb/investors/shareholder-information/share-scam-alert/

Pot, kettle, black, is a *Boiler*

*Many victims have been successfully *ingesting* for several years*

*Doctors* are advised to be wary of any solicited advice*

*If one of these *doctors* contacts you by phone, it is best to hang up*

*These *brokers* can be very persistent and extremely persuasive about fraud with victims losing an average of £20,000.

Is that all?

Life?

Meaningless.

Just a little example as to how one can bend language and suit it to any situation one comes across…

We have a little *graphic designer* whose job is to *handle* the GSK website. http://www.gsk.com/en-gb/our-stories/how-we-do-randd/data-transparency/

He is not doing it well because he is constantly opening, not only himself, but, his company, to crude analysis………..

My advice would be less about Data Transparency and less about Paroxetine, paraded in front of us and just comment on interesting things like the New Chairman joining forces on the same day as the AGM…………..nothing like a bad share price to make this chap jump……….

Richard

Do “most of us challenge authority on instinct” as you claim? At least in this instance I began by accepting authority, and then by a very slow and painful process came to realise that my family had been betrayed, and many others too.

I have also provided a lot of information for anyone who is interested.

But even if this were not the case, anybody who has a reaction to a pharmaceutical product is owed sympathetic monitoring and investigation – that is how ethical medicine would work if we had it.

John Horgan has a further blog on this topic, which is now included in the original post and reproduced here:

I recently knocked science journalist Chris Mooney for asserting that “You Have No Business Challenging Scientific Experts.” Non-experts have the right and even the duty, I retorted, to question scientific experts, who often get things wrong.

Far from reconsidering his stance, Mooney doubles down on it in a Washington Post column, “The science of why you really should listen to science and experts,” that defends not just scientific experts but experts in general. Mooney ends up not boosting experts’ credibility but undermining his own.

He cites a study that found that judges and other lawyers show less ideological bias, or “identity-protective cognition,” in their application of the law than law students and lay people. Titled “‘Ideology’ or ‘Situation Sense’? An Experimental Investigation of Motivated Reasoning and Professional Judgment,” the study was carried out by Yale law and psychology professor Dan Kahan and five other scholars.

To my mind, the study merely shows that lawyers and judges know the law better than law students and non-lawyers. That’s reassuring, but surely it does not mean we should always trust lawyers’ legal advice, especially since lawyers so often disagree on interpretations of the law. Consider the rancor of recent debates on health care, immigration, taxes, the environment and other issues in Washington, where more than one third of current Representatives and one half of Senators have law degrees, according to The National Law Journal.

Mooney nonetheless insists that the Kahan study “fits nicely alongside a growing trend toward robustly defending and reaffirming the importance of experts.” As an example of this trend, he cites the 2005 book Expert Political Judgment by political psychologist Philip Tetlock.

Mooney’s citation of Tetlock is bizarre, because Expert Political Judgment—far from a defense of experts—is a devastating critique of them. Tetlock reports on his long-term study of 284 professional pundits, including academics, government officials and journalists, who comment on politics and related issues in scholarly journals and conferences and via mass media. Over two decades, Tetlock recorded some 28,000 predictions by the experts related to wars, elections, economic collapses and other events.

In a terrific 2005 review, “Everybody’s An Expert,” New Yorker writer Louis Menand summarizes Tetlock’s conclusions as follows:

“people who make prediction their business—people who appear as experts on television, get quoted in newspaper articles, advise governments and businesses, and participate in punditry roundtables—are no better than the rest of us. When they’re wrong, they’re rarely held accountable, and they rarely admit it, either. They insist that they were just off on timing, or blindsided by an improbable event, or almost right, or wrong for the right reasons. They have the same repertoire of self-justifications that everyone has, and are no more inclined than anyone else to revise their beliefs about the way the world works, or ought to work, just because they made a mistake. No one is paying you for your gratuitous opinions about other people, but the experts are being paid, and Tetlock claims that the better known and more frequently quoted they are, the less reliable their guesses about the future are likely to be. The accuracy of an expert’s predictions actually has an inverse relationship to his or her self-confidence, renown, and, beyond a certain point, depth of knowledge. People who follow current events by reading the papers and newsmagazines regularly can guess what is likely to happen about as accurately as the specialists whom the papers quote.”

Menand’s review is loaded with gleeful one-liners, including this one: “Human beings who spend their lives studying the state of the world, in other words, are poorer forecasters than dart-throwing monkeys.” And yet this is no laughing matter. Consider how “experts” in the government, academia and media helped enable the catastrophic U.S. wars in Afghanistan and Iraq and the economic collapse of 2008. Example: New York Times columnist Thomas Friedman, who just before the U.S. invasion of Iraq expressed the hope that it would lead to “a more accountable, progressive and democratizing regime.”

How can Mooney possibly interpret Tetlock’s book as a defense of experts? Here’s how. He seizes on Tetlock’s finding that some experts were better forecasters than others. They tended to be not what Tetlock calls “hedgehogs,” who explain the world in terms of one big unified theory, but “foxes.” Foxes, Tetlock explains, “are skeptical of grand schemes,” and “diffident about their own forecasting prowess.”

In other words, the most credible experts are those who, implicitly, warn us to be wary of experts. Mooney is oblivious to this irony. “So experts really do exist,” he blithely concludes, “and they really are different from non-experts. Now, all we have to do is listen to them.”

I prefer Menand’s conclusion. He writes that “the best lesson of Tetlock’s book may be the one that he seems most reluctant to draw: Think for yourself.”

I have always thought along similar lines albeit with a much less sophisticated approach.

Regardless of the odds, either someone considered an expert or I can be wrong about a particular matter. Sometimes it’s both of us. But certainly as far as health care goes, if my doctor is wrong – I pay the consequences; if I’m wrong – I pay the consequences.

So until that changes, I’ll keep thinking for myself and suffer society’s condemnation as a fool for it.

This discussion has grown long and somewhat circular. And I think many of us are talking past each other. It seems to me that two central questions are being missed: given the high level of skepticism of medical treatments which is represented here, should any of us ever see a doctor when we feel ill? And if we do, then how do we reliably distinguish between the genuine healers and the dangerous quacks? How do we generalize from the reports offered to something that might resemble a standard of effective care?

Regards,

I don’t find it all that complicated or that black and white.

“Should any of us ever see a doctor when we feel ill?”

Why wouldn’t we? Doctors are as much a source of information as anywhere else. Presumably better but not necessarily. But I will say that I don’t intend to do surgery on myself ever.

“How do we reliably distinguish between the genuine healers and the dangerous quacks?”

Using the information you can obtain from a multitude of sources and the skepticism you appear to disparage. Not every doctor is a quack but I assure you that not a single one of them is perfect.

“How do we generalize from the reports offered to something that might resemble a standard of effective care?”

I don’t want to abandon scientific research; what I want to abandon is one-size-fits-all medicine, and particularly dietary advice, when I find it is not working for me. Also, when reports come in from multiple people, physicians and researchers may want to pay attention to the after-market clinical trial going on right in front of them before assuming a patient has no idea what they are talking about.

A lot of things would be done differently in an ethically sound medical services system. Pharmaceutical advertising would be banned legally from public media in the US and NZ. All trials data and notes would be documented under supervision and published for independent analysis. Replication studies would be funded by public money. Adverse reaction reports would be reported to and validated by bodies independent from drug companies. Courts would recognize the validity of class action suits challenging not malpractice, but standards of care that are founded on unproven assertions or outright fraud.

But we don’t live in that world yet, and we may never live in it if the “critics” simply criticize and fuss and fulminate instead of getting organized and applying the methods of science to understanding medical evidence; then lobbying for legal remedies in the courts as well as the press.

One anecdotal case of bad reactions to a medication can be a catastrophe for the person who goes through it and for their family.

A hundred anecdotal cases are both a catastrophe and an indicator of issues that need to be explored and resolved by somebody who isn’t financially (or perhaps even professionally) self-interested.

Thousands of anecdotal cases supported by medical case reports should be grounds for suing somebody out of their positions of authority and privilege.

The place where I fault this blog as well as Mad in America and a number of similar venues, is that all too often you never get past the rhetoric of criticism to any kind of organized program for fixing the bloody problem! Forgive me if I doubt that you’re serious until you do.

Sincerely,

A lot of the people contributing here have taken legal actions. Some of us have lost jobs as a result of putting forward our point of view. Some of us have set up RxISK.org as a way to solve the problem. One of us has analyzed the problem in considerable depth in a series of books.

You don’t seem to have done any research on the people you are throwing jibes at

David

Dear Richard Lawhern.

You will not see the benefit of knowing a side-effect upfront until you’ve experienced one. (As opposed to many of the commenters on this blog)

Have you asked yourself what your “statistical proposal” means to those poor 999 individuals who have to live through a possible side-effect before they can get acknowledgement for it?

Please give me some advice as to what I should do: I have experienced several “events” that thousands of other people have reported as “side-effects”.

Is it somehow OK, in your opinion, that Big Pharma takes extreme measures not to accept certain side-effects just because they are not replicable in 8-week trials? (Because I feel that an 8-week controlled trial is somewhat less extensive than my 15 years on the medication…)

David,

Whilst some may think John Horgan adopts a reasonable stance he makes the same mistakes about science as those he criticises. All that is in his favour is that his mistakes are repeated daily by even those who are working scientists by profession and by the vast multitude beyond them.

Horgan assumes throughout there is a one-size-fits-all brand of “science” and that all the knowledge it delivers is capable of being reliable even if it is not always so. Horgan acknowledges sometimes even “authoritative” scientists are wrong. But his lack of understanding of what it is he covers by indiscriminate use of the label “science” makes for some cringing schoolboy howlers like “Science is a uniquely potent method for discovering how nature works”. Four hundred years ago Sir Francis Bacon recognised that this was not correct, writing in Novum Organum in 1620:

“XXXVII. Our method and that of the sceptics’ agree in some respects at first setting out, but differ most widely, and are completely opposed to each other in their conclusion; for they roundly assert that nothing can be known; we, that but a small part of nature can be known, …” [1].

The problem is we have been sold a conceptual pup, a pig in a poke. From the earliest time any of us are taught science in schools what is in the bag labelled “science” is not what we are told it is. The problem is people like Horgan have never looked properly in the bag, if they have looked at all, to see what it is that is really there – like the buyer of a pig in a poke, it is not a pig they have been given in the bag but a pup.

There is no one flavour of “science”, there is no one scientific method and the vast preponderance of knowledge delivered by what we lazily refer to under the umbrella term “science” is not reliable and indeed not even scientific if we judge it by what we think ought to qualify.

When executing a commission to write two peer reviewed papers about the nature of scientific and other knowledge I found the task an eye-opener despite the benefit of having read a graduate degree in physics. The fundaments of what we call “science” do not match the descriptions propounded by educators to students in schools and universities nor in journal papers or the media.

Modern physical science and its successes in technological advances are built on a foundation of inquiry by reductionism. Whilst some mathematicians knew over a hundred years ago and possibly much earlier, it seems it is only now that some scientists are catching up and realising how reductionism fails [and dramatically so] when faced with complexity.

Complexity is why doctors cannot be scientific in the diagnosis and the treatment of patients. But this goes wider and deeper affecting all spheres of human knowledge and endeavour. Medicine is one example. The principles and the issues are of more general applicability. Complexity is why meteorologists can never succeed in predicting the weather reliably nor economists in formulating accurate predictions of the behaviour of economies nor psychologists in predicting an individual’s behaviour.

The successes of the exact sciences in modern western societies have been used to create an impression that we can know and predict everything about nature and more when the truth is far from that conception. Much more is known in modern psychology today about how “the mind” works than 30 years ago but it is when we look to the inexact sciences that we find an array of theories which are falsified in the Popperian sense by the very experiments by which the theories gain credence. Psychology’s successes are built upon inexact theories which cannot be used to predict reliably and certainly not in the way successful repeatedly verified theories in the exact sciences can be.

And how exact a “science” is physics? In astronomy and space physics the classical reductionist experiments upon which physics’ classification as an exact science is founded are impossible. Theories cannot be tested by experiment. We cannot put a planetary system nor a galaxy in a laboratory. Meteorology, cosmology, geology are all sciences in which reductionist experiments are impossible.

But after examining these kinds of limitations it is when complexity presents itself that science fails. The conventional view of what “science” is does not work in the real world for providing knowledge about how to handle complex systems – which include the human body – and much more besides.

Complexity dictates why doctors can only work as they do and why they cannot be “scientific” when they do it – but they can use knowledge gained by science in what they do to inform their expert intuition and judgement gained by experience of treating many patients over many years.

What some may find surprising in particular is the realisation that despite the success of reductionism in the exact sciences, beyond simple linear systems, for everything else we are left to educated guesswork about what might happen in all areas. Expert judgement and intuition based on long experience is indispensable to the practice of many professions and callings.

We cannot use science to predict reliably what will happen in a complex world even if we see the same things happening over and over. Our solar system, which everyone used to think is highly regular, could behave irregularly and possibly chaotically with planets deviating substantially from what were thought reliably predictable orbits.

If you want your conventional “consensus” conception of science realigned to match the unforgiving truth, the first of the commissioned papers is entitled “Medicine is not Science” [2]. This led to the second entitled “Medicine is Not Science: Guessing the Future, Predicting the Past” [3].

These papers are the only ones I and my co-author know of which addressed the issues. The papers cannot be posted online but anyone interested can be provided a personal copy if they contact me. Both were presented at a conference in Madrid in July last year. The powerpoint can be downloaded from the link here [4] and an abstract which introduces Knowledge-Based Medicine here [5].

Neither of the papers is based on anything other than existing knowledge. It is the analysis and explanation which is new, as if no one had thought to question any of it before. If anyone does find something similar I should be most grateful to know.

[1] Bacon, Lord F. (1620). Novum Organum or True Suggestions For The Interpretation Of Nature. [(1902) English translation ed. by Joseph Devey]. New York: P.F. Collier & Son.

[2] Miller, C. & Miller, D. (2014) Medicine is Not Science EJPCH 2 (2)

[3] Miller, C (2014) Medicine is Not Science, Guessing the Future, Predicting the Past JECP 20:6, 865–871

[4] https://www.researchgate.net/profile/Clifford_Miller/publication/264235962_Medicine_is_not_science_Guessing_the_future_predicting_the_past._Introducing_Knowledge-based_Medicine/links/53d3ca3d0cf220632f3ce74e.pdf

[5] https://www.researchgate.net/publication/264235962_Medicine_is_not_science_Guessing_the_future_predicting_the_past._Introducing_Knowledge-based_Medicine

Clifford

Thanks for this. I think John Horgan is much closer to you than might appear on the surface – it’s just rare to attempt to grapple with the issues you lay out here in a blog post.

David

Think for yourself

Philosopher and author AC Grayling on thinking critically and being a well-informed citizen of the world.

https://richarddawkins.net/2009/12/a-c-grayling-the-unconsidered-life-3/

Ironically from the former University of Oxford Professor for Public Understanding of Science… It seems science has changed its message to the public.

It’s only 2 mins long.

Richard

How about if you’re living the bloody evidence.

I saw my son turn into a paranoid psychotic mess within the week of stopping venlafaxine. He did not take the drug for depression, he was prescribed off label for a neurological condition, so it was not a return of depression.

He is now facing a prison sentence. A young man who has never been in trouble, never so much as been arrested or even a caution. Never been violent.

I have never seen anything like it in my life. This has ruined his and my life.

So I may not be scientific or academic, I can’t reel off lots of facts and jargon, I just live it and watch my 20 year old Son’s life go down the tubes.

That’s my evidence.

Lisa, I have just found your comment about your son. It is now 2 years later, and I so hope he has not become even more unwell. Your comment caught my attention because my son, aged 32, also took Venlafaxine for a reason that was not depression: it was RoAccutane (acne drug) triggered brain damage, although this was not understood till after his death. A psychiatrist, who he only met twice, for about an hour on each occasion, suggested he stop the Venlafaxine immediately (no suggestion about the advisability of tailing off). He too had never been violent, was law abiding, kindness itself etc etc. He changed for the much worse within 2 days. Within 2 months he had taken his own life, convinced the world would be better off without him. He took his scientific evidence from the psychiatrist who predicted, loudly and insensitively, that because of his expressed suicidal feelings on stopping Venlafaxine, he probably would take his own life, but even so, he was being denied further Home Treatment as he had “not co-operated”, presumably because he was saying how ill he felt and this, in the psychiatrist’s mind, did not fit the evidence ie that stopping this drug so quickly could cause akathisia, and irrational suicidal thoughts.

Today I have attended an NHS Wellbeing Event where many service providers set out their stalls and explained to the public what ways to Wellbeing they could provide. Having set up a charity in our son’s name, on our stall we were offering creative courses in a compassionate and understanding peaceful countryside environment for anyone suffering anxiety or depression. When asked, we explained to people what had happened to our son, several being themselves professional service providers locally. I was horrified, though sadly unsurprised, to learn that that same psychiatrist had ruined many other lives besides our son’s (and inadvertently of course, ours). The man has just retired, within the last few weeks. All those who commented having known him, some having worked with him, said thank heavens future patients have been saved from him. So where does this fit with DH’s post re questioning scientific evidence? We did, in polite suggestion to the psychiatrist before our son died, but he told us we were talking nonsense. He believed stopping Venlafaxine suddenly could not cause the symptoms our son was manifesting. Yet it was ‘anecdotally’ obvious to us that it was. As DH says, many of us have tried to get our voices heard, but can’t seem to make much difference. I do so hope your son is improving, and I thank you for the evidence you’ve shown us, by writing your comment, that it’s likely our impressions were correct.

I don’t think David Healy, Neal Carlin or anyone else with RxISK wants to rely solely on “anecdotes” and throw experimental evidence in the dumpster. Neither does John Horgan. But we do have a few problems with Richard Lawhern’s proposal (and that of Horgan’s student of science journalism) that we solve our problem with an appeal to “the evidence.” Here’s one:

There is a BIG difference between “scientific evidence” and “articles in peer-reviewed journals.” Especially when it comes to medicine. And the key problem is exactly what Richard pointed to: reproducibility. When you make a scientific claim, you are supposed to lay bare ALL your findings, and ALL your methods for arriving at them, so that other scientists can examine them and try to duplicate your results. If you don’t do that, said my high-school biology teacher, IT AIN’T SCIENCE.

Yet that standard has been utterly abandoned in modern medicine, where it’s become routine for the financial sponsors of research to assert that they “own” the results and don’t even have to share them with regulators, let alone with people who doubt their findings. As a result, an analysis of all the randomized controlled trials of a given treatment may or may not get you any closer to “the facts” about its usefulness or safety. It may just be garbage in, garbage out.

It’s not just a few conspiracy freaks who think this, but a growing roster of senior scientific experts which includes current and former editors of the New England Journal of Medicine and the BMJ. Here’s a good example of why:

A study just released by Northwestern Med School found a small but significant number of men who’d used Finasteride for baldness suffering severe sexual side effects. It also found a total lack of useful evidence in the published trial literature that would help doctors or patients decide if Finasteride was worth the risk. http://www.feinberg.northwestern.edu/news/2015/04/Belknap-baldness-safety.html

Moreover, they had no clue whether the authors had simply not found this evidence because they failed to look for it, or had in fact found the evidence and suppressed it. No one can say – because the raw results from these “scientific” studies have not been shared with anyone! Thirty or forty years ago that might have been shocking. Now it’s the rule, not the exception.

It’s common for people who see these facts for the first time to think there must be a quick fix. Surely this is illegal! Why don’t you talk to your senator? Draft a law, and get him or her to sponsor it? Why don’t you bring this up in your professional societies? Why don’t you call the New York Times, or get on the Oprah show? There are procedures, in a democracy, to bring bad actors like this to justice. Use them! Don’t just rant and rave on your pathetic little website.

It’s only when you start trying to do exactly this, that you discover how many forces are ranged against you. It’s much like trying to reform the banking system or the stock market. A lot of things that are illegal, and others that clearly ought to be, are also very profitable. And with wealth, goes power.

The fight for basic access to “the evidence” already spans continents, and involves hundreds of scientists and thousands of lay activists. If our accomplishments are awful damn modest to date, it ain’t because we haven’t tried all the conventional methods of political and professional influence. It’s because we’re up against Goliath, for real. Hope you’ll join us.

Lisa, this is an appalling and not untypical situation in which you find yourself.

If Rxisk shows up Venlafaxine to have violent side-effects it could be debated in a court room that the law of probabilities is that Venlafaxine caused your son to commit an aggressive act.

Presumably his doctor would argue that he gave this drug to your son in ‘blind faith’.

Do we criticise the doctor?

Do we suggest to your doctor he should be more aware of risk even though he may not have experienced such a reaction before with any one else?

Do we maintain that doctors who do not describe fully or even mention the possibility of discontinuation symptoms are not doing their job properly?

The doctor is the go-between, piggy in the middle and the rights of patients to seek redress when it all goes wrong should be a critical part of the medical system and acknowledged as such.

I am just one more ‘anecdote’ but I am hopeful that the thousands of ‘anecdotes*’ from swallowing Venlafaxine or Paroxetine or Fluoxetine or anything else will be a support for you in these months ahead.

The Burden of Proof is your burden

http://uk.practicallaw.com/2-500-6576?service=ld

Stick to your guns…there are and always will be ugly people who only have their own interests at heart.

Annie

Thank you Annie. We are fighting it all the way for him. His Doctors have been very supportive they just did not realise that venlafaxine could cause such severe withdrawal.

It’s very stressful I had to try and cope with the fact that he was diagnosed with an incurable neurological condition that severely impedes his life and now this.

It breaks my heart to see my Parents who are pensioners and have never been in a court in their lives sitting watching their grandson locked in a perspex box. I don’t sleep, I had to leave my job. We were a normal family just trying to get through life and when he was diagnosed I thought that his diagnosis was the worst thing that could happen, I had no idea that it could get worse than that. I can’t even describe properly what it’s like…. because what’s happened is so surreal and out of context with our lives that I struggle to believe it’s real.

And before anyone decides to pipe up that venlafaxine works for them, I realise that. I’m not saying that prescription drugs should be banned. I’m saying that if we had been aware of how severe the effects of withdrawal could be things may have been different. With hindsight there were several red flags in the days leading up to the event but none of us acted on them because we didn’t know they meant anything.

He is still on a lot of prescription drugs, he has to be to try and live the best life he can but now we are more informed and aware. Some of the drugs are helping him, some haven’t. It’s trial and error but at least now we are aware the errors can be minimised and managed.

One of the things that really narks me is that if you dare speak up and say anything about pharmaceutical companies and prescription drugs you are treated as if you are some sort of hippy dippy fanatic who wants all drugs and treatments banned. Why does wanting the information to make informed decisions about what you put into your body mean that to the majority?

The other is people like Richard, who obviously has no knowledge of David Healy, making comments like “forgive me if I doubt you are serious until you do”.

Are you serious, Richard?

Forgive me if I think you should “go home and talk to yourself”

The Mooney problem is not of course that he’s mistaken (which goes without saying), the problem is with what he does which is to manipulate and isolate: it is about crowd control and compliance. He’s the sheep dog. Looking back Horgan half gets the problem that Mooney is in a paradoxical and self-negating position (as a non-expert) but he accords him too much respect. Secondly, he falls for the Jenny McCarthy trap ie Horgan’s opinion and doubts about vaccines are so much more important than Jenny’s, but of course Jenny is the evidence. If you don’t run after her other people might speak up too. It happened at the end of 2013 with Katie Couric as well. She ran a programme on girls who had suffered ill-effects of HPV vaccines. There was a massive storm round this very experienced newsperson, people writing about their problems to her blog were swamped with vicious attacks from self-styled “sceptics” (there were over 12,000 comments), she was made to eat humble pie, do another program in which the HPV vaccine proponents had it all their own way, and her career was more or less wrecked anyway.

This morning in California you can watch every mainstream media outlet baying to have vaccines made compulsory for all schoolchildren – this is not because they have considered all the ramifications of an endlessly extending schedule, the toxic ingredients, the limitations of the knowledge, the corrupt nature of public agencies; it is because they are paid to, or because they themselves are intimidated. This is not to do with science, it is to do with penning people in for financial gain, and the creation of an ideology in order to achieve this.

Of great interest I think is this report by Kent Heckenlively of Robert Kennedy Jr’s address to California’s Commonwealth Club a couple of days ago:

http://www.ageofautism.com/2015/04/kennedy-at-the-commonwealth-club-and-the-fight-for-california.html

“..In discussing how this came to happen, he cited the removal of vaccines from the civil justice system by the creation of the Vaccine Court in 1986, and the vast amount of money spent by the pharmaceutical industry in the political process (twice that of oil companies), and among the news networks (sometimes 70% of their advertising revenues). The lawyers have been taken out of the process, the regulatory agencies, the politicians, and the press neutralized.

“Some interesting information was revealed by Kennedy about CDC whistleblower, Dr. William Thompson. Thompson invoked federal whistleblower status this summer and hired leading whistleblower attorney, Morgan VerKamp, to represent him on his claim that his superiors had required him to lie for the past ten years about the connection between vaccines and autism. He has turned over tens of thousands of pages of documents to Congress and has “asked” to be subpoenaed by Congress. The matter is currently before Congressman Jason Chaffetz’s Oversight committee. Kennedy related how the chief of staff of Chaffetz’s office had said that these documents, when they are released, “are not just a smoking gun, but a wildfire that will burn CDC to its foundations.”

“Kennedy talked of how he had been present when his family started what became known as “Special Olympics” and they never saw a child with autism. He talked about how the severe problems of these children cause their parents to essentially “disappear” from society and political discourse because they are just trying to get through the day managing their lives and their children. To say that this problem was missed in previous generations was ‘like missing a train wreck.” He recalled how he had been in Utah the previous night, which has both the highest vaccination rate and the highest autism rate, 1 out of every 39 kids…”

John, on the subject of HPV vaccination, another review has recently been published in The Lancet Infectious Diseases supporting this intervention: “Population-level impact and herd effects following human papillomavirus vaccination programmes: a systematic review and meta-analysis”, published online 2 March 2015: http://www.thelancet.com/journals/laninf/article/PIIS1473-3099%2814%2971073-4/fulltext

The abstract includes this interpretation: “Our results are promising for the long-term population-level effects of HPV vaccination programmes. However, continued monitoring is essential to identify any signals of potential waning efficacy or type-replacement.”

I wonder how many children and their parents are being properly informed of the possibility of waning efficacy or type-replacement with the use of HPV vaccines, and the implications this may have? It is my strong suspicion that in many instances valid ‘informed consent’ is not being properly obtained before this medical intervention.

It is notable that the review is behind the paywall of The Lancet Infectious Diseases, i.e. it can be purchased for $31.50 USD. I suggest it is highly problematic that papers which promote the use of vaccine products are not open access, i.e. easily accessible for public perusal.

There’s also commentary in The Lancet Infectious Diseases on this review: “Greatest effect of HPV vaccination from school-based programmes”: http://www.thelancet.com/journals/laninf/article/PIIS1473-3099%2815%2970078-2/fulltext

Again, it’s behind the paywall…. For interested citizens who do not have the privilege of institutional access, this will mean a time-consuming visit to a university library to try and access the paper there, or another $31.50 USD for the coffers of The Lancet Infectious Diseases, kerching…

One of the authors of this review is Julia Brotherton. This person has been involved in the promotion of HPV vaccination in Australia for some years, at least since 2003. See for example: “Planning for human papillomavirus vaccines in Australia. Report of a research group meeting.” CDI Vol 28 No. 2 2004: http://www.health.gov.au/internet/main/publishing.nsf/Content/cda-pubs-cdi-2004-cdi2802-htm-cdi2802p.htm

In the acknowledgements of this report published in 2004 it is noted: “We would like to thank CSL Pharmaceuticals and GlaxoSmithKline for their support in facilitating this meeting…”

Julia Brotherton and the other author of the report, Peter McIntyre (currently an ex officio member of the Australian Technical Advisory Group on Immunisation), have been associated with CSL and GSK for some time.

It really concerns me that people such as Julia Brotherton, who have associations with industry, and who may also have an ideological and career interest in ‘proving’ the benefits of HPV vaccination, are also the ones evaluating the effectiveness of HPV vaccination. Personally, I have no confidence in their objectivity on this matter.

I’ve also become very cynical about the often industry-associated ‘peer-reviewed literature’. Even The Lancet’s editor, Richard Horton, has confessed that: “Journals have devolved into information laundering operations for the pharmaceutical industry.” (As quoted in Richard Smith’s essay “Medical Journals Are an Extension of the Marketing Arm of Pharmaceutical Companies” PLOS Medicine 17 May 2005: http://journals.plos.org/plosmedicine/article?id=10.1371/journal.pmed.0020138 )

Cervical cancer and other HPV cancers only kill about 20,000-30,000 Americans a year. The vaccines, even if universal, would not prevent all of those deaths. HPV can be prevented by other means, which should be obvious without my stating them. Injecting 320,000,000 people with a poorly-understood vaccine to prevent an infection that rarely leads to cancer or death seems to be overkill, perhaps literally.

(Of course, non-lethal cases of cervical and similar cancers are more numerous than lethal ones, and treatment can be disabling and life-disrupting, so those cases should be taken into account alongside deaths when evaluating the need for the vaccines.)

But still, I think people who manage to limit their exposure to HPV have a better chance at good health than do those who use the vaccine and do not alter their behavior.

Early detection is a very good tool, but the CDC has been advising women to get tested for dysplasia and cancer less often than ever before. Last I heard it was every three years for someone whose last test was negative. To me that is ridiculous. Getting infected the day after a negative test would mean three years for dysplasia to develop without detection, during which it could advance into cancer.

My own oncologist at a fancy cancer center told me (in 2009) that there was no evidence that the vaccines prevented cancer, at that time, anyway. (Maybe more is known by now.)

Another reason to scrutinize HPV vaccines is that not all squamous cell cancers of the cervix, head and neck, anus, etc., reveal HPV, and not all cases of HPV result in cancer. The vast majority do not.

So, though HPV is associated with certain cancers, it is hard to say it causes them, given that it is neither necessary nor sufficient to bring about the squamous cell cancers it is correlated with.

Of course this is only anecdotal, but nuns, virgins and Jewish women (with Jewish male partners) are unlikely to get cervical cancer. I wonder why.

Correction to previous post: I just found out I was wrong about the number of deaths. CDC puts the number of probable HPV cancer CASES, not deaths, at 26,000 a year.

http://www.cdc.gov/cancer/hpv/statistics/cases.htm

I think people like Horton and Smith and Godlee (Smith’s successor at BMJ) try and get street cred by admitting there is a problem, but it does not mean they are not up to their ears in it themselves.

During my independent layperson’s investigations into vaccine products, I’ve been struck by the often arrogant, patronising and dogmatic attitudes of people with a science background I’ve come across. It seems to me there is a religious fervor by some people wedded to their ‘scientific’ views on vaccination, and they will brook no dissent.

It’s interesting to contrast the assuredness of vaccination zealots with others less certain of the status quo….

Consider for example the attitude of the late journalist Christopher Hitchens in his interview with Jennifer Byrne about his memoir “Hitch 22”, undertaken in 2010.

During the interview Hitchens described his concept of “Hitch 22”: “Hitch 22 for me is, that having been involved in various kinds of quite solid commitment and allegiance throughout a lot of my life, been through it and out the other side, that now the only group I’m associated with is a loosely knit collection of people – Richard Dawkins is the best known member of the founding group. The meeting of this group was held in my house, there’s a photograph of it in the book – Sam Harris, Dan Dennett, people who…want to defend science and reason, and who say that the main principles are that the only thing you’re sure of is uncertainty; that the only thing that is certain is doubt; that the main thing is the Socratic principle…you’re only educated when you understand how ignorant you are. So all assertions of faith, or absolutism, or complete belief are, almost by definition, useless and false… And that actually, that’s quite a strong commitment to be making…to…a party of doubt and uncertainty, open-mindedness and scepticism. I feel I can spend the rest of my life doing that with a fair degree of conviction.”(1)

Hitchens refers to Richard Dawkins in the interview. An internet search indicates Dawkins is an avid supporter of the ‘science’ of vaccination, see for example: “Stop the Anti-Vaccine Gospel”: https://richarddawkins.net/2013/09/stop-the-anti-vaccine-gospel/

I presume Dawkins puts his faith in the industry-funded scientific community on this matter. I wonder if he has ever critically examined any individual vaccine products, e.g. the arbitrary second dose of live measles/mumps/rubella vaccine; the questionable effectiveness of the pertussis vaccine and annual flu vaccines; and the dubious international fast-tracking of HPV vaccination?

Ref 1:

The interview with Christopher Hitchens is currently on YouTube. The discussion about “Hitch 22” starts at 05.44 in Part 2. It’s a great interview, worth watching:

Jennifer Byrne Presents: Christopher Hitchens

Pt. 1 http://www.youtube.com/watch?NR=1&feature=endscreen&v=1yuSMBrOSGs

Pt. 2 http://www.youtube.com/watch?v=81FcA4PlMus&feature=related

Pt. 3 http://www.youtube.com/watch?v=sWV_CJ1WU78&feature=related

No human being is constituted to know the truth, the whole truth, and nothing but the truth; and even the best of men must be content with fragments, with partial glimpses, never the full fruition.

William Osler

There’s nothing new…….

These are the people I followed for years. Dawkins sucked me in and is in fact responsible for a lot of my interest in science. I read all his books, and Hitchens, but I kept coming across conflicts and inconsistencies: when I applied the very methods Dawkins taught to many of his own assertions, they just didn’t hold up.

It almost made me write him a letter to ask if I was missing something or if he was missing something. I didn’t do it because I thought ‘who was I to question the great Dawkins of Oxford’. Shortly after, someone lobbed a hand grenade into my home in the shape of venlafaxine, which kept me busy for the next while.

He stays out of the deeper medical questions for the most part as far as I can see. He is a defender of science, but it’s orthodoxy that he defends, so when pressed he will side with vaccines, antidepressants or whatever, but never with any real depth.

Oddly enough, Dawkins had MMR proponent Michael Fitzpatrick (trustee of Sense About Science) on a BBC documentary, as if belief in MMR was a foundation of a modern secularist’s view of the universe. Quite amusingly, Fitzpatrick subsequently turned on Dawkins because he did not like his dogmatic view on religion.

Hahaha, made my day. In my experience (but sadly only of others) too much praise and “success” can be very harmful to people. Only a minority can maintain their critical self-doubt under its ego-bloating pressure. I consequently try to do my thankless bit by slagging off anyone who makes a mistake.